“Better have your baby before you’re 37” is advice that Professor Kui Liu of the Department of Obstetrics and Gynaecology likes to give his students in a light-hearted manner. The line draws a laugh, but everyone in the room knows that it is no joke.

A woman’s eggs start to deteriorate in both quantity and quality at age 37, regardless of her ethnicity. Sperm quality also deteriorates as men age, particularly the quality of the DNA they pass to their offspring, although this process has not been pinned to a particular age.

Professor Liu and his colleague, Dr Philip Chiu Chi-ngong, have been researching the problem of egg and sperm quality in human reproduction, and their findings offer new insights that point to paths for artificial insemination and other benefits.

Professor Liu’s work is focussed on egg quality. He has been looking at the cell-cycle regulation of eggs, in particular the primordial follicles that produce eggs. One problem in infertility is that the ovarian follicles are not big enough and cannot respond to the hormone stimulation provided by in vitro fertilisation (IVF). In fact, IVF usually only results in conception for about 25 to 30 per cent of women.

“Our approach has been to look inside the molecular network and, instead of hormones, apply chemicals or drugs downstream at the lower molecular factors and try to trace the growth of a normal egg,” he said.


Moment of conception

This work led Professor Liu to identify signalling pathways involved in activating primordial follicles and to develop a new treatment for female infertility called in vitro activation (IVA). So far, the method has resulted in 27 babies being born to infertile women, but he said the success rate is still low – one study in Tokyo resulted in two babies from 36 women. Other trials are underway in China, Spain and the US.

“Theoretically we are targeting the correct pathway but I believe the right drug has not been found yet. I hope we can do this within the next 10 to 15 years,” he said. Professor Liu recently joined HKU from Umeå University in Sweden and finding the right drug will be the major focus of his research here.

Dr Chiu has been studying sperm quality and the actions of the sperm at the moment of conception. For sperm to successfully fertilise an egg, they must bind with the matrix that surrounds the egg, which is made up of carbohydrates and proteins. Dr Chiu was the first to identify the carbohydrate chain that mediates the interaction between the sperm and egg, called sialyl-Lewis X (SLeX) – a finding that has spurred research on male contraception, sperm quality and forensic evidence collection for sexual assault cases.

On male contraception, Dr Chiu has collaborated with the University of Georgia in the US to synthesise a glycan that can carry SLeX and bind with sperm. “This molecule has very high affinity with the sperm and we are now looking at whether we can develop it into a male contraceptive that would block the binding of SLeX on the human egg surface with the sperm,” he said.

Services in demand

He is also working with large IVF centres in China to collect a large number of semen samples, both normal and pathological, to identify markers that can predict the fertilising ability of sperm before they are used for IVF. This work could potentially also identify the quality of the sperm in terms of producing healthy babies.

The research on forensic evidence collection comes from an independent study at Stanford University that used Dr Chiu’s finding on SLeX to drastically reduce the time needed to collect sperm for sexual assault cases from eight hours to 80 minutes. The sperm can provide the DNA of male attackers, but it must be of very high quality and can easily become contaminated under existing procedures.

Professor Liu and Dr Chiu see growing demand for studies like theirs because couples are starting their families at older ages. In Hong Kong, for instance, the median age of women at first childbirth was 31.4 in 2015, according to the Census and Statistics Department – up from 29.4 in 2001 – meaning half of first-time mothers were older than that when they gave birth. Leaving pregnancies until later in life will only increase fertility problems. In addition, the change in Mainland China’s one-child policy in 2016 to allow couples to have two children has enticed older couples to try for another child.

“Many older women are thinking of having other children and we hope to help them,” Dr Chiu said.

RAISING THE ODDS OF CONCEPTION

About 10 to 15 per cent of couples have difficulty conceiving babies. Research at HKU on sperm and eggs is aiming to improve their chances of success.

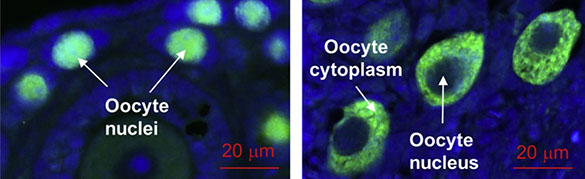

Upon activation from small primordial follicles (left) to primary follicles (right), the transcription factor FOXO3a shuttles from the nuclei of the oocytes (left, green signal) to the cytoplasm of the oocytes (right, green signal).

May 2019, Volume 20, No. 2, Cover Story